From the start, I’ve intentionally used AI as a core part of my workflow. This isn’t accidental: I’ve been trying to figure out what AI actually means for me as a developer.

I spent some time reading and watching talks about AI. One that stood out was a TED Talk by Raymond Fu. He talks about how software engineering is changing, and the main takeaway for me was simple:

Who I am as a developer has to change.

And I’m actively trying to do that.

The Shift: From Developer → Solutions Engineer

I’ve started taking on bigger projects.

Before AI, progress felt different. Wins were smaller but meaningful—you’d learn a concept, struggle through syntax, and eventually get something working. That was the growth.

Now? I can ask AI to do something—and it just… does it.

So what does that mean?

Do we not need developers anymore?

Kind of… yeah. At least not in the traditional sense.

There’s still a need for deeper technical work—low-level systems, custom libraries, edge cases—but a lot of what we used to do is being abstracted away.

What I’m starting to call a **solutions engineer** (not sure if that’s the official term, but it fits) is someone who:

- Identifies problems

- Designs systems to solve them

- Uses AI to handle the low-level implementation

A traditional software engineer focuses on code and systems. Now, the code is mostly handled. You still need to understand it, but fluency isn’t the bottleneck anymore.

Raymond’s point really stuck with me: we don’t get to just be the quiet programmer anymore.

Now you need to:

- Think like a designer

- Understand systems end-to-end

- Communicate clearly

- Work across multiple domains

You’re no longer uniquely skilled in a stack—you’re uniquely skilled in building solutions.

Project Prompt

With all of that in mind, I wanted to actually practice this shift.

I use Obsidian a lot—it’s a markdown-based note system with a really powerful graph view. I like it because I work on a lot of things at once, and being able to connect ideas helps me stay grounded.

But it takes time.

So the idea was:

What if I could just talk to an agent in natural language, and it figures out where everything goes?

Instead of manually organizing notes, I want:

- Input → natural language

- Output → structured, connected knowledge

Targeted Features

- Natural Language Input: Just write normally

- Automated Categorization: AI decides where it belongs

- Graph Integration: Notes connect automatically

- Conversational Search: Ask instead of digging

- Adaptive Learning: Gets better over time

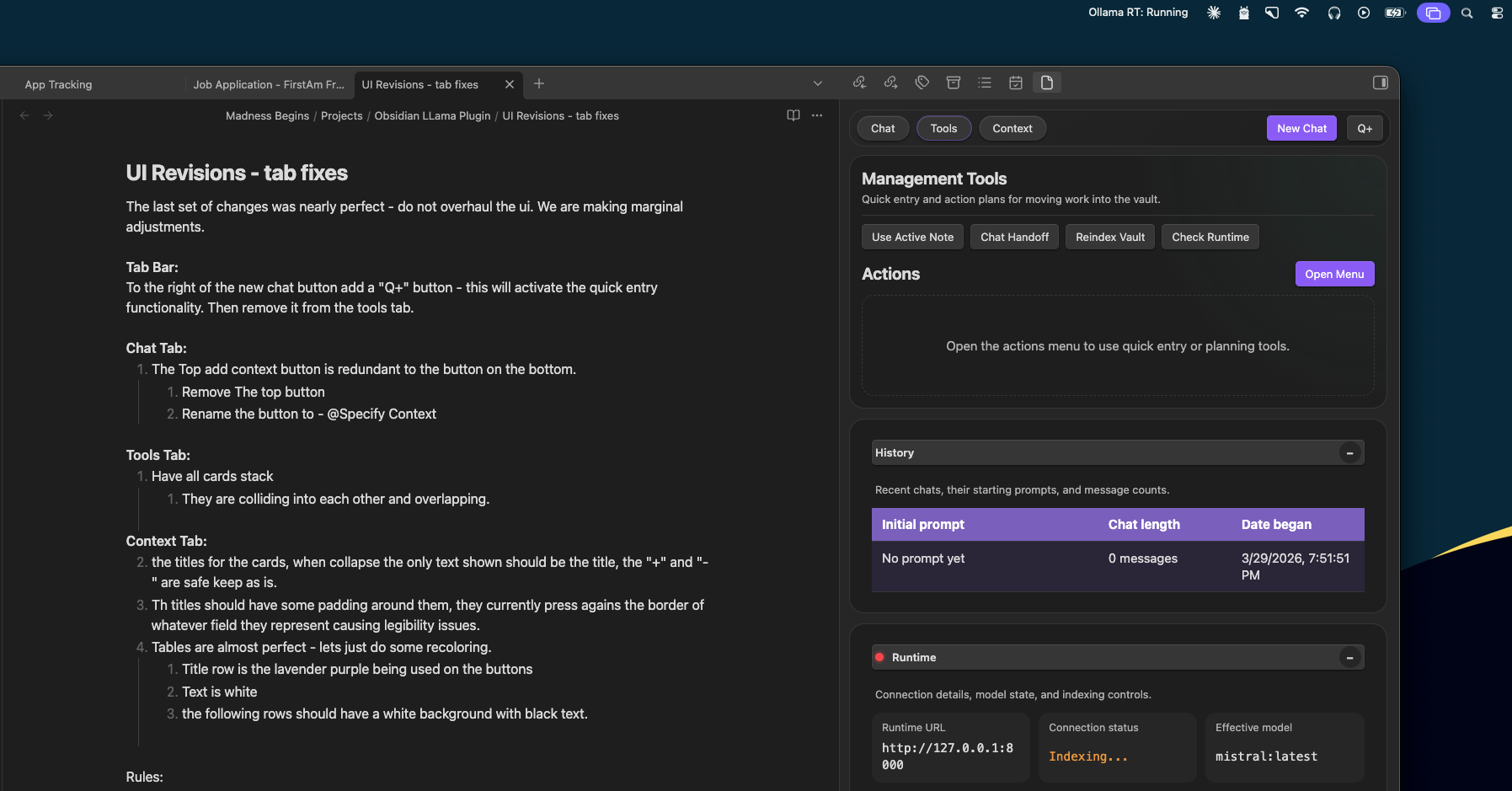

I opened up Zed and created a basic structure.

Two main parts:

- Runtime environment for AI

- Plugin interface for Obsidian

Then I created a Markdown file: Goals & Rules

This was basically my first real prompt into Codex:

- What I’m building

- Folder structure

- Tech stack

- Naming conventions

- MVP features

Looking back, I’d write it differently now—but at the time, it was enough.

And here’s the weird part:

I wrote it → cleaned it with ChatGPT → gave it to Codex → …and it built the plugin.

That felt… wrong.

Like it wasn’t really my work.

But at the same time, I had a functioning plugin. Not useful, but working.

That led to a pretty real thought:

What’s the point of learning software engineering if this is where things are going?

If someone can just ask for a system and get it…

What’s my role?

I pushed that aside for now—because the goal of this project is to learn how to work with AI, not fight it.

I kept going.

Writing more: UI ideas, runtime behavior, back and forth.

All in Markdown.

That became my “language.”

It’s readable, structured, and works well when feeding into AI tools.

And things started working—but not perfectly.

What I started learning:

- AI doesn’t infer intent as well as you think

- You need to define rules explicitly

- If you’re vague, it will go off track

Sometimes it would rewrite things in ways that looked correct—but introduced subtle issues.

That was the first real signal:

We still need engineers—not for syntax, but for control and direction.

At this point, I had:

- A plugin running

- Ollama with a local model

- A runtime bridging everything together

(Not pretty—but functional.)

I chose Ollama for two reasons:

- No API costs

- This is personal data—I want it local
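For readers who haven't used Ollama, here is a minimal sketch of what asking a local model a question can look like from Python. It assumes an Ollama server is running on its default local port and that a model has already been pulled. The model name `llama3` and the helper names `build_request` and `ask` are placeholders for illustration, not the project's actual runtime code.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumes default settings).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3"):
    """Build the JSON payload for one non-streaming completion.

    The model name is an assumption; use whichever model you have pulled.
    """
    return {"model": model, "prompt": prompt, "stream": False}


def ask(prompt, model="llama3"):
    """Send the prompt to the local server and return the generated text."""
    data = json.dumps(build_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL,
        data=data,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.loads(response.read())
    # With "stream": False the reply arrives as one JSON object
    # whose "response" field holds the generated text.
    return body["response"]
```

Calling `ask("What can you tell me about the vault?")` sends only that sentence to the model. Nothing about the notes travels with it, so the model has nothing to ground an answer in.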

So I started testing it.

I asked:

“What can you tell me about the vault?”

The response was useless.

It either knew nothing or gave random notes.

After a few iterations, I realized the problem:

The AI had no context.

The “Aha” Moment

You can’t just ask AI to “figure it out.” It won’t.

It needs structured context.

This is where engineering comes back—but in a different form.

Not debugging loops or memory leaks—but:

- Defining systems

- Designing context

- Structuring information

One thing I did that helped a lot:

I forced the AI to document everything it did as a guide.

Not because I’d redo it manually—but because I needed visibility into what it was doing.

That gave me:

- Understanding

- Traceability

- A way to learn from it

I started asking:

What does it mean for AI to “know” something?

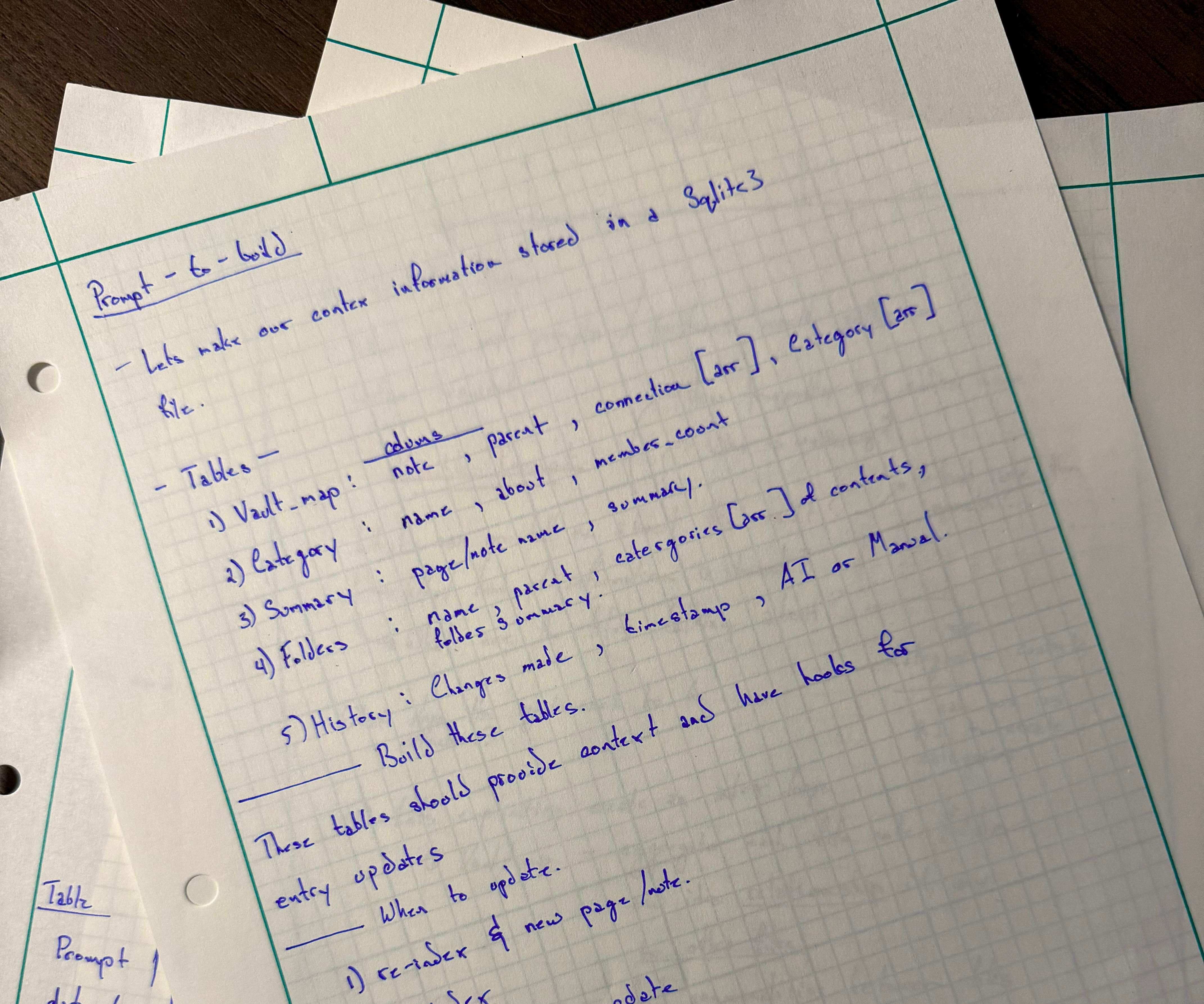

I came up with a few requirements:

- It should know the file structure

- It should know categories

- It should have summaries

- It should track changes

Originally, this was stored as JSON.

That didn’t scale.

So I moved it to a SQLite database.

Now instead of static text, I had:

- Structured context

- Relationships

- Queryable data
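A rough sketch of what such a database could look like, using Python's built-in `sqlite3` module. The table and column names (`notes`, `categories`, `note_categories`) and the helper functions are hypothetical. They are chosen to cover the four requirements above (file structure, categories, summaries, change tracking) and do not reflect the project's real schema.

```python
import sqlite3

# Hypothetical schema: one row per note, one row per category,
# and a link table so a note can belong to several categories.
SCHEMA = """
CREATE TABLE IF NOT EXISTS notes (
    id          INTEGER PRIMARY KEY,
    path        TEXT NOT NULL UNIQUE,  -- file structure: where the note lives
    summary     TEXT,                  -- short generated summary
    modified_at TEXT NOT NULL          -- change tracking: last seen modification time
);
CREATE TABLE IF NOT EXISTS categories (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE IF NOT EXISTS note_categories (
    note_id     INTEGER NOT NULL REFERENCES notes(id),
    category_id INTEGER NOT NULL REFERENCES categories(id),
    PRIMARY KEY (note_id, category_id)
);
"""


def open_index(db_path=":memory:"):
    """Open (or create) the index database and make sure the tables exist."""
    conn = sqlite3.connect(db_path)
    conn.executescript(SCHEMA)
    return conn


def add_note(conn, path, summary, modified_at, categories):
    """Insert a note, or update it if the path is already indexed."""
    cur = conn.execute(
        "UPDATE notes SET summary = ?, modified_at = ? WHERE path = ?",
        (summary, modified_at, path),
    )
    if cur.rowcount == 0:
        conn.execute(
            "INSERT INTO notes (path, summary, modified_at) VALUES (?, ?, ?)",
            (path, summary, modified_at),
        )
    note_id = conn.execute(
        "SELECT id FROM notes WHERE path = ?", (path,)
    ).fetchone()[0]
    for name in categories:
        # Reuse an existing category row when the name is already known.
        conn.execute("INSERT OR IGNORE INTO categories (name) VALUES (?)", (name,))
        category_id = conn.execute(
            "SELECT id FROM categories WHERE name = ?", (name,)
        ).fetchone()[0]
        conn.execute(
            "INSERT OR IGNORE INTO note_categories (note_id, category_id) VALUES (?, ?)",
            (note_id, category_id),
        )
    conn.commit()


def notes_in_category(conn, name):
    """Return the paths of all notes linked to a category, sorted by path."""
    rows = conn.execute(
        "SELECT n.path FROM notes n "
        "JOIN note_categories nc ON nc.note_id = n.id "
        "JOIN categories c ON c.id = nc.category_id "
        "WHERE c.name = ? ORDER BY n.path",
        (name,),
    )
    return [row[0] for row in rows]
```

The link table is what turns a flat list into relationships. A question like "which notes belong to this category?" becomes a single query instead of a scan through a JSON blob.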

Now the AI actually had something to work with.

This is where things got real again.

Example: I asked the AI to generate categories.

It:

- Treated hex codes as tags

- Misinterpreted projects

- Confused contexts completely

Why?

Because I didn’t define the rules clearly enough.

So I had to:

- Normalize data

- Define categorization rules

- Shift from literal → contextual understanding
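As one illustration of the normalization step, here is a small sketch that pulls `#tags` out of note text while skipping anything that looks like a hex color code. The regular expressions and the function name `extract_tags` are assumptions made for this example, not the rules the plugin actually uses.

```python
import re

# Anything shaped like "#word" is a tag candidate.
TAG = re.compile(r"#[A-Za-z0-9_/-]+")
# 3-, 6-, or 8-digit hex strings are treated as colors, not tags.
HEX_COLOR = re.compile(r"^#(?:[0-9a-fA-F]{3}|[0-9a-fA-F]{6}|[0-9a-fA-F]{8})$")


def extract_tags(text):
    """Return unique, lowercased tags in order of first appearance.

    Caveat: a real tag made only of hex letters (for example "#facade")
    would also be dropped, so an explicit allow-list may be needed.
    """
    tags = []
    for candidate in TAG.findall(text):
        if HEX_COLOR.match(candidate):
            continue  # skip color codes such as #ff8800
        tag = candidate[1:].lower()
        if tag not in tags:
            tags.append(tag)
    return tags
```

For example, `extract_tags("Set the #Plugin header to #ff8800 for #projects/obsidian")` returns `["plugin", "projects/obsidian"]`. A rule like this is cheap to write, but the model will not come up with it on its own.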

One prompt that helped:

Generate 2–3 word summaries, and reuse existing categories when possible.

It’s still messy, but it’s improving.

At a high level:

- Vault gets indexed

- Data is structured into tables

- Context is generated

- Context is fed into the model

- Model responds with awareness of the system
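The flow above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: it skips the database step and builds the context straight from the files, and the function names (`index_vault`, `build_context`, `build_prompt`) and the prompt wording are made up for the example.

```python
from pathlib import Path


def index_vault(vault_dir):
    """Walk the vault and collect one small record per Markdown note."""
    root = Path(vault_dir)
    records = []
    for path in sorted(root.rglob("*.md")):
        text = path.read_text(encoding="utf-8")
        # Use the first non-empty line as a cheap stand-in for a summary.
        first_line = next(
            (line.strip() for line in text.splitlines() if line.strip()), ""
        )
        records.append({
            "path": path.relative_to(root).as_posix(),
            "preview": first_line.lstrip("# ")[:80],
        })
    return records


def build_context(records):
    """Turn the index into a compact Markdown block the model can read."""
    lines = ["## Vault index"]
    for record in records:
        lines.append(f"- {record['path']}: {record['preview']}")
    return "\n".join(lines)


def build_prompt(context, question):
    """Put the context in front of the question before sending it to the model."""
    return (
        f"{context}\n\n"
        f"## Question\n{question}\n\n"
        "Answer using only the vault index above."
    )
```

The string returned by `build_prompt` is what gets sent to the model in place of the bare question. That is the difference between a random answer and one that reflects what is actually in the vault.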

Now when I ask: “What is this vault about?”

It actually answers.

Not perfectly—but correctly.

This project is ongoing.

It’s:

- On GitHub

- Functional

- Not user-ready

To run it, you’d need:

- Local LLM setup

- Plugin installation

- Manual configuration

But it works.

And more importantly—it’s teaching me a lot.

The biggest shift for me:

I’m not writing code first anymore. I’m writing Markdown prompts.

Structured, verbose, intentional prompts:

- Rules

- Expectations

- Context

- Tasks
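One way to keep prompts in that shape is to assemble them from the four sections in code. The sketch below is hypothetical: `render_prompt` and its section order are illustrative choices, not part of the project.

```python
def render_prompt(rules, expectations, context, tasks):
    """Render four lists of strings as a Markdown prompt with fixed sections."""
    sections = [
        ("Rules", rules),
        ("Expectations", expectations),
        ("Context", context),
        ("Tasks", tasks),
    ]
    parts = []
    for title, items in sections:
        parts.append(f"## {title}")
        parts.extend(f"- {item}" for item in items)
        parts.append("")  # blank line between sections
    return "\n".join(parts).rstrip() + "\n"
```

Because the headings are fixed, every prompt reaches the model in the same layout. That makes it easier to see which section was too vague when the output goes off track.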

As those improve:

- Less supervision is needed

- Fewer corrections

- Better results

That feels like the direction things are going.